Introduction

Software engineering teams use automated generation tools to speed up their product delivery cycles. Developers have relied on code completion assistants and syntax generators for years because these utilities help them write boilerplate logic faster and reduce repetitive typing. Today, companies deploy large generation engines across entire engineering departments and expect them to replace human developers, lower operational costs, and increase output. However, many engineering leaders struggle during this transition because they encounter severe verification overhead and declining code quality. Only 29% of developers trust the accuracy of these systems, so teams end up debugging generated errors rather than writing original code. Artificial intelligence in software development introduces hidden costs when organizations fail to implement strict quality controls: teams that skip these controls accumulate technical debt that eventually halts feature development. This article explains how to integrate generation engines into engineering workflows while maintaining product stability.

Instant Productivity Illusion

Engineering teams that adopt AI development tools often expect instant results. Because these engines generate syntax instantly, managers assume projects will finish faster. However, faster task completion frequently creates severe review bottlenecks. According to Faros AI, teams with high adoption rates complete 21% more tasks, but their review times increase by 91%. This productivity paradox happens because automated systems produce code that looks accurate at first glance but contains subtle logical errors.

Developers must remain vigilant when they inspect this generated code. A 2025 Stack Overflow survey found that 66% of developers feel frustrated by automated solutions whose outputs are almost right but not quite accurate. These developers often spend more time fixing subtle mistakes than they would spend writing the code manually. The time needed to generate code approaches zero, but human verification remains bounded by cognition. This limit creates a persistent verification bottleneck that drains engineering resources.

Software architects require precise methods to review generated outputs. If engineers blindly accept boilerplate logic, they introduce structural flaws into the product. This dynamic resembles how organizations approach content creation for non-profits, where human editors verify every detail before publication. Teams prevent verification overhead only when they allocate sufficient time for manual code review and treat automated suggestions as rough drafts rather than finished features.

Artificial Intelligence In Software Development Safety

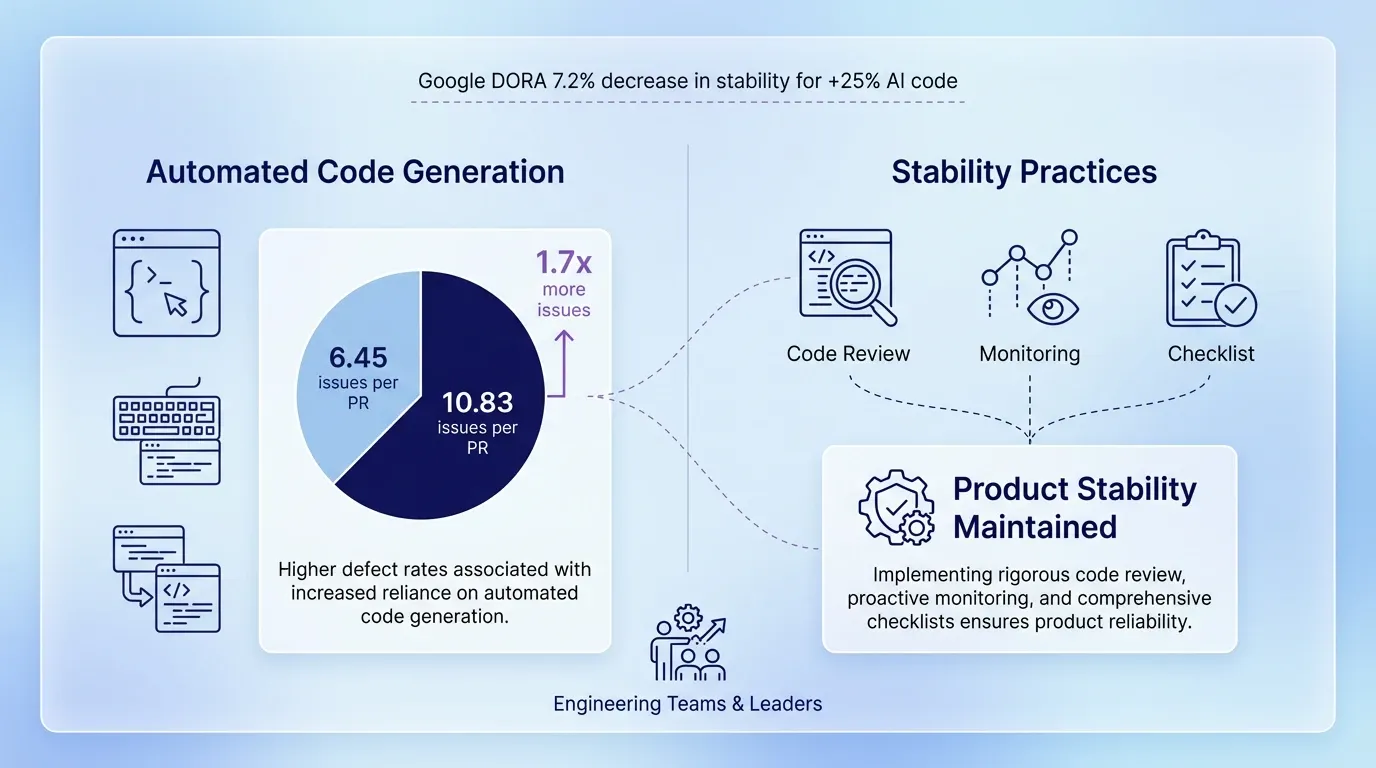

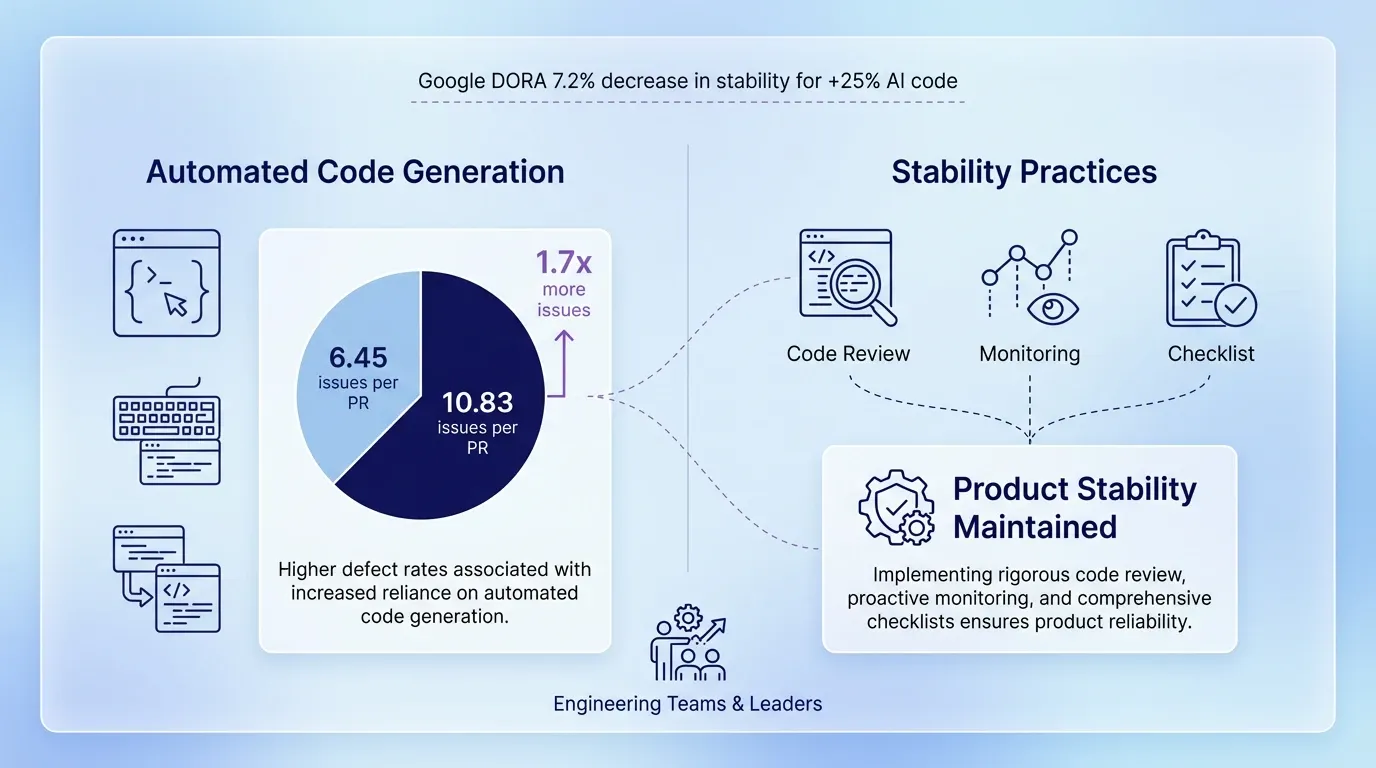

Organizations implement artificial intelligence in software development safely when they treat automated suggestions as rough drafts and quality engineering as a strategic function. Increased reliance on automated generation correlates with decreased system reliability. The DevOps Research and Assessment (DORA) team at Google found that a 25% increase in automated coding is associated with a 7.2% decrease in delivery stability. This decrease happens because generation engines prioritize volume over architectural integrity.

Without disciplined oversight, automated engines inject numerous defects into the main codebase. According to CodeRabbit, automatically generated code contains 1.7 times more issues overall: it averages 10.83 issues per pull request compared to 6.45 issues for human code. Engineering departments cannot absorb this error rate without diverting time from feature development.

To prevent architectural degradation, engineering leaders implement strict structural changes across their development pipelines. They establish specific quality gates that evaluate every automated contribution. Teams achieve production-level stability when they adopt the following quality engineering practices:

- Track the defect rates of automated suggestions separately from human contributions.
- Require senior developers to review complex business logic that machines generate.
- Reject pull requests that lack adequate unit tests for automated syntax.
- Monitor the codebase for duplicated functions that machines frequently generate.

When organizations enforce these quality practices, they capture the speed benefits of automation and maintain product stability.
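As a rough illustration of the first and third practices, the sketch below tracks defects per pull request separately by origin and rejects machine-generated changes that ship without tests. The `PullRequest` record, its field names, and the convention that test files live under `tests/` or are named `test_*` are all assumptions made for this example, not features of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    """Hypothetical pull request record; field names are illustrative."""
    origin: str               # "ai" or "human" (assumed labels)
    changed_files: list[str]
    defects_found: int = 0    # defects later traced back to this pull request


def has_tests(pr: PullRequest) -> bool:
    """Assume test files live under tests/ or are named test_*."""
    for path in pr.changed_files:
        name = path.rsplit("/", 1)[-1]
        if path.startswith("tests/") or name.startswith("test_"):
            return True
    return False


def gate(pr: PullRequest) -> tuple[bool, str]:
    """Reject machine-generated pull requests that ship without tests."""
    if pr.origin == "ai" and not has_tests(pr):
        return False, "AI-generated change lacks unit tests"
    return True, "ok"


def defects_per_pr(prs: list[PullRequest]) -> dict[str, float]:
    """Average defects per pull request, reported separately per origin."""
    by_origin: dict[str, list[int]] = {}
    for pr in prs:
        by_origin.setdefault(pr.origin, []).append(pr.defects_found)
    return {origin: sum(v) / len(v) for origin, v in by_origin.items()}
```

In a real pipeline the origin label would have to come from somewhere reliable, such as a commit trailer or a pull request label, and that choice is left open here.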

Automated Programming Systems Management

Engineering teams maintain this product stability and prevent architectural drift when they establish structured verification pipelines for intelligent programming systems. These automated generators often suggest outdated libraries or ignore established design patterns. If developers accept these suggestions without verification, the codebase quickly becomes a disjointed collection of incompatible functions.

To manage intelligent programming systems effectively, organizations integrate automated checks directly into their continuous integration and continuous deployment pipelines. Before they design these pipelines, engineering teams must also decide how to deploy the underlying inference models. Organizations that evaluate the architectural tradeoffs between local and cloud AI deployments build more resilient pipelines because they understand the latency, compliance, and cost constraints of each approach. Once the pipeline is in place, the automated checks scan every new commit for security vulnerabilities and architectural compliance, and static analysis tools automatically flag functions that violate the company's coding standards. When senior engineers rely on these automated gates, they spend less time on trivial syntax errors and focus more on complex business logic. This approach helps machine-generated code meet the quality standards expected of human developers.
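A static analysis gate of this kind can be small. The following sketch uses Python's standard `ast` module to flag functions that break two assumed rules: every function needs a docstring, and no function may exceed a line limit. Both rules and the `MAX_FUNCTION_LINES` value are placeholders for whatever the team's actual coding standard says.

```python
import ast

MAX_FUNCTION_LINES = 40  # assumed limit; replace with your own standard


def check_source(source: str) -> list[str]:
    """Return one message per function that breaks the assumed standard."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        if ast.get_docstring(node) is None:
            findings.append(f"{node.name}:{node.lineno} missing docstring")
        length = node.end_lineno - node.lineno + 1
        if length > MAX_FUNCTION_LINES:
            findings.append(f"{node.name}:{node.lineno} is {length} lines long")
    return findings
```

A CI step could call `check_source` on each changed file and exit with a non-zero status when the returned list is not empty, which blocks the merge until a human resolves the findings.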

Strict Testing Protocols

Organizations mitigate the risk of algorithmic errors when they expand their test coverage. When developers use AI development tools to write features, they often fail to write the corresponding unit tests. This behavior creates a dangerous gap in product stability. Machine-generated code looks convincing, but it frequently fails edge cases that human engineers naturally anticipate.

Engineering departments enforce rigorous testing protocols to catch these hidden flaws. Developers write tests before they ask the machine to generate the feature logic. This practice forces the generator to adhere to specific behavioral requirements. Furthermore, thorough integration testing verifies that the automated syntax interacts properly with legacy systems. Just as marketers track metrics to improve search visibility for charities, software teams maintain system health when they continuously validate machine outputs against strict test suites.
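The test-first practice might look like the sketch below. The `apply_discount` function and its rules are invented for illustration; the point is that the test class is written first and defines the behavior, including edge cases, that any generated implementation has to satisfy.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Candidate implementation; in practice the generator would produce this body."""
    if price < 0 or not 0 <= percent <= 100:
        raise ValueError("price must be >= 0 and percent within 0..100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    """Written before generation: these cases are the behavioral contract."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_and_full_discount(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)
        self.assertEqual(apply_discount(80.0, 100), 0.0)

    def test_rejects_invalid_input(self):
        with self.assertRaises(ValueError):
            apply_discount(-1.0, 10)
        with self.assertRaises(ValueError):
            apply_discount(10.0, 101)
```

Running `python -m unittest` against the module executes the suite. If a regenerated implementation drops the input validation, the third test fails and the change does not merge.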

Engineering Talent Pipeline Crisis

Strict test suites maintain system health, but over-reliance on code generation threatens the future of the software engineering profession. Many companies attempt to cut costs by using automation to replace junior staff. This short-sighted strategy has contributed to a severe collapse in entry-level hiring. According to ByteIota, junior developer hiring has dropped 73% since 2022, even though overall job postings increased. While executives celebrate immediate payroll savings, they create a strategic vulnerability.

Automation destroys the talent pipeline when it replaces junior engineers. Today's junior developers become tomorrow's senior architects who understand the company's complex legacy systems. If organizations stop hiring beginners, they will not have experienced leaders to manage their infrastructure five years from now. Matt Garman, the Chief Executive Officer of Amazon Web Services, emphasized this reality when he called the replacement of junior developers with automation one of the dumbest things he has ever heard. Machine generation cannot replace the institutional knowledge that human engineers build over time.

Engineering leaders keep entry-level roles and build sustainable mentorship programs. Companies can use automated systems as interactive tutors that help junior developers understand complex codebases faster. These tools can explain unfamiliar syntax and suggest focused improvements during pair-programming sessions. This educational approach works similarly to how organizations build search marketing campaigns for non-profits, where automated analytics guide human strategy. When companies treat automation as a teaching aid, they preserve their talent pipeline and speed up the growth of their junior staff.

Practical Code Review Workflows

Software teams that integrate artificial intelligence in software development also need a cautious approach to code evaluation. Automated generators encourage developers to submit massive blocks of code even when they do not understand the logic. According to Faros AI, teams with high automation adoption experience a 154% increase in pull request size and a 9% increase in bugs per developer. This volume overwhelms reviewers and causes readability problems. CodeRabbit reports that readability issues were more than three times as frequent in machine-generated contributions as in human code. Unreviewed oversized submissions quickly make the codebase unmaintainable.

Engineering managers apply a methodical strategy for pull requests to counter this trend. They treat intelligent programming systems as research assistants rather than autonomous developers. Just as digital marketers rely on search engine visibility reports to analyze market trends before they launch campaigns, software engineers use automated analyzers to spot architectural inconsistencies before they merge code. This analysis prevents structural degradation. Teams secure their codebases when they enforce specific review procedures:

- Developers break large automated submissions into smaller components.
- Engineers explain the generated business logic during peer reviews.
- Reviewers reject syntax that lacks clear variable naming or formatting conventions.
- Automated systems run static analysis tools to verify compliance with company coding standards.

Developers maintain architectural discipline and prevent systemic failures when they treat machine outputs as rough drafts.
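The first review procedure, breaking large submissions into smaller components, can be supported by a simple size check. The sketch below assumes a per-pull-request budget of 400 changed lines and proposes a split by top-level directory when the budget is exceeded. Both the threshold and the splitting heuristic are illustrative choices, not recommendations drawn from the studies cited above.

```python
from collections import defaultdict

MAX_CHANGED_LINES = 400  # assumed review budget per pull request


def split_by_top_level_dir(changes: dict[str, int]) -> dict[str, int]:
    """Group changed-line counts by top-level directory as a first split proposal."""
    groups: dict[str, int] = defaultdict(int)
    for path, lines in changes.items():
        top = path.split("/", 1)[0] if "/" in path else "."
        groups[top] += lines
    return dict(groups)


def review_size(changes: dict[str, int]) -> tuple[bool, dict[str, int]]:
    """Return (within_budget, proposed_split); the split is empty when the PR fits."""
    total = sum(changes.values())
    if total <= MAX_CHANGED_LINES:
        return True, {}
    return False, split_by_top_level_dir(changes)
```

The `changes` mapping (file path to changed-line count) would come from the version control system. A directory-based split is only a starting point, since the author still has to confirm that each piece builds and makes sense on its own.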

Legacy Systems Modernization Without Added Debt

Software teams apply this architectural discipline when they use a controlled process to update outdated architectures and prevent system failures. When companies introduce AI development tools, developers prioritize new features over improvements to existing code. GitClear analyzed 211 million lines of code and found that refactoring work dropped from 25% to less than 10% between 2021 and 2024. Because developers neglect code maintenance, intelligent programming systems build new logic on top of fragile foundations. This behavior hides unpatched security vulnerabilities within the legacy systems. Consequently, Forrester predicts that 75% of software organizations will face technical debt by 2026 due to machine-generated code.

Engineering departments design a deliberate plan to refactor legacy applications and modernize systems safely. First, software architects map out the existing dependencies and isolate the specific modules that need updates. Next, developers use artificial intelligence in software development to analyze these isolated components and suggest optimized syntax. Engineers avoid replacing the entire architecture at once and apply the suggested changes in small increments. They run comprehensive security scans on each updated module to catch vulnerabilities before the code reaches production. When a machine suggests an insecure library, the automated testing pipeline rejects the deployment. This progression helps companies upgrade their infrastructure, avoid unmanageable technical debt, and maintain essential business operations.
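The last safeguard, rejecting a deployment when a machine suggests an insecure library, can be approximated with a deny-list check over a requirements file. In this sketch the package names in `DENIED` are made up, and the parser handles only simple `name` and `name==version` style lines. A production pipeline would more likely rely on a dedicated vulnerability scanner.

```python
import re

# Hypothetical deny list: package name -> reason. Names here are invented examples.
DENIED = {
    "examplecrypto": "unmaintained; use the approved crypto library",
    "oldhttp": "no TLS verification by default",
}


def denied_requirements(requirements_text: str) -> list[str]:
    """Return a message for each requirement line that names a denied package."""
    problems = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        # The package name is the leading run of name characters before any specifier.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match is None:
            continue
        name = match.group(0).lower()
        if name in DENIED:
            problems.append(f"{name}: {DENIED[name]}")
    return problems
```

A pipeline step could fail the build whenever `denied_requirements` returns a non-empty list, so an insecure suggestion is caught before the updated module reaches production.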

Conclusion

In summary, artificial intelligence in software development accelerates product delivery only when software teams maintain strict quality pipelines. Generation engines are efficient code generators, but they cannot replace the architectural discipline of human developers. Teams need continuous human oversight to prevent technical debt and ensure long-term product stability. In the future, teams will likely treat automated code generation as a standard utility rather than an autonomous solution. Before deploying these tools, teams should evaluate their current code review workflows and establish rigid quality gates. Organizations that need expert guidance to implement these systems can explore dedicated AI development services that handle integration architecture and ensure machine learning capabilities align with existing engineering standards from the start.