Introduction

AI in software engineering has moved past the experimental pilot phase and now serves as essential infrastructure for companies. Adoption is rapid: a recent survey shows that 90% of enterprises have deployed GitHub Copilot for development tasks. However, companies that add chat-based assistants without changing their underlying processes end up with brittle codebases and compounding failures from AI-generated code. Many organizations struggle with this transition because they assume that software licenses automatically improve code output.

Companies must fundamentally restructure traditional agile workflows to achieve measurable gains. Developers can no longer just write code; they must learn to orchestrate agents through strict upfront specifications and rigorous automated testing environments. Without clear boundaries, models hallucinate variables and produce dead code that requires manual cleanup later. Teams then hit severe productivity bottlenecks because developers spend more time fixing machine-generated errors than writing original code.

If companies want to maximize return on investment, then they must implement a reliable workflow for AI in application development. This blueprint explains how teams rebuild planning sessions, review criteria, and quality assurance processes to achieve production-grade code safely.

The 2026 Reality of AI Engineering

Many companies purchase paid licenses for their development teams and expect immediate results. But these companies soon discover that providing model access does not improve code output. A recent survey shows that 92.6% of developers use AI coding assistants, yet their productivity gains remain flat. Companies fail to achieve the expected results because they treat machine learning models as simple autocomplete tools. Organizations must change their workflow if they want to build a modern digital strategy.

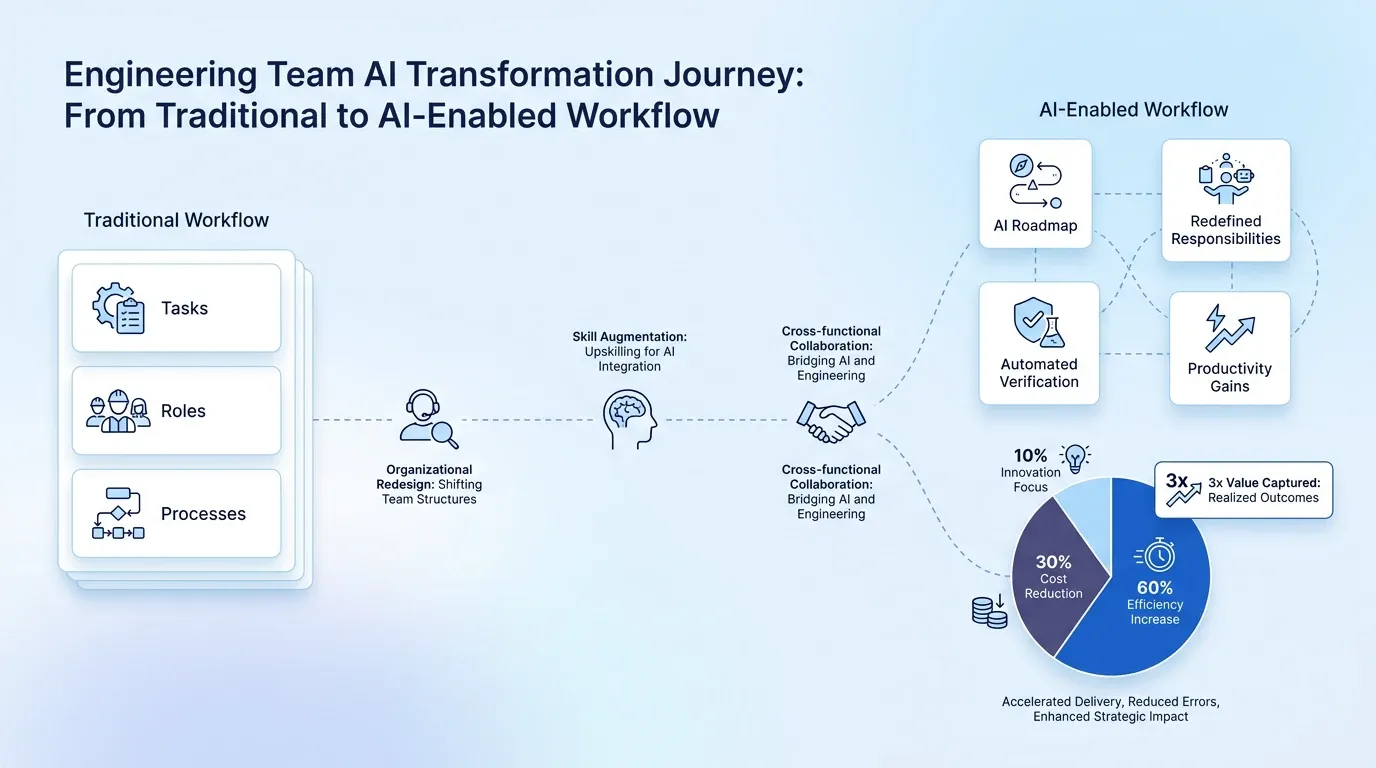

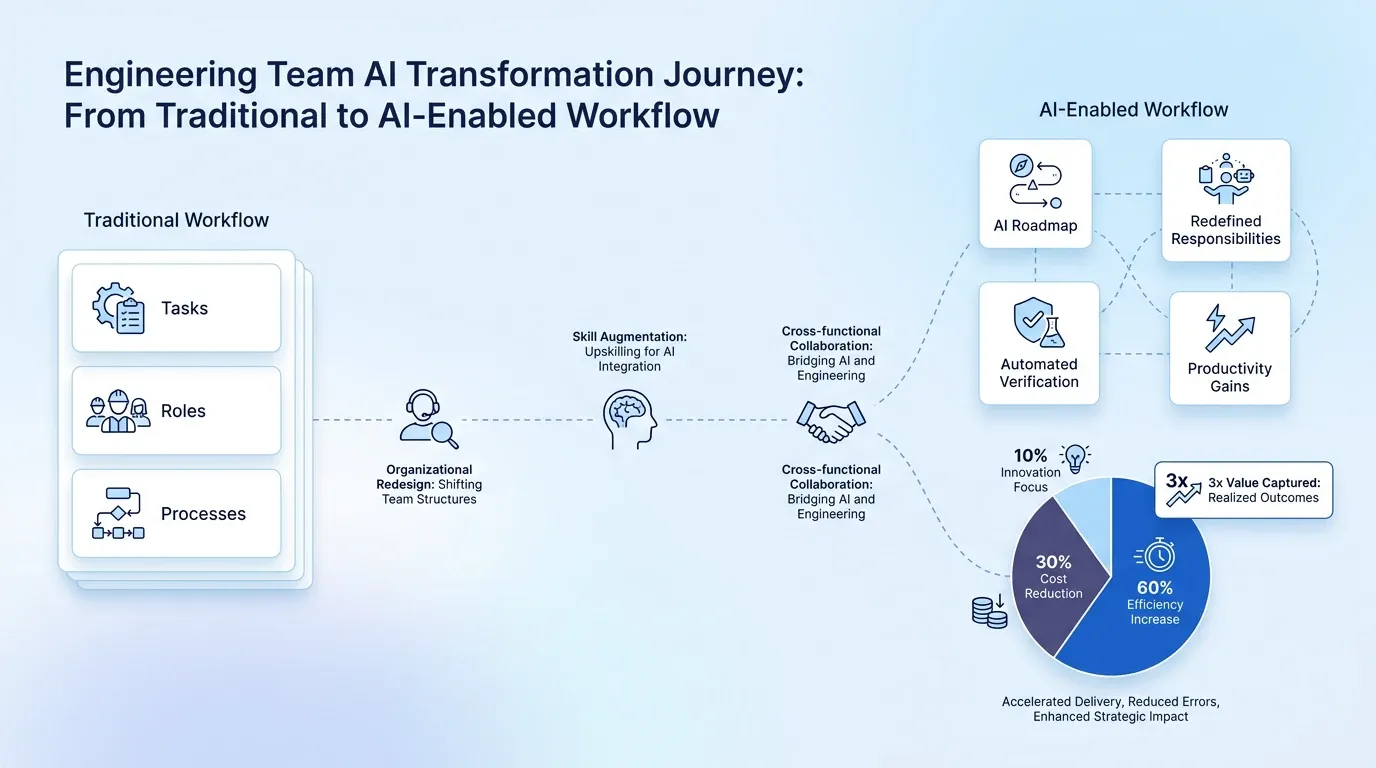

Vendors often promise large speed improvements, but actual productivity gains plateau at 10% rather than the marketed doubling or tripling. These flat metrics suggest that traditional agile methods do not work well with machine-generated code. Teams need a concrete AI implementation roadmap to guide their transition toward reliable systems, and they build that foundation by restructuring their entire development lifecycle around new operating principles. The transition requires time and discipline, but it brings clarity to the engineering process, helps teams adopt intelligent software engineering practices, and creates a foundation for measurable growth across the organization. It starts with how teams plan complex development tasks.

Specification-Driven Development

Complex development tasks expose the limitations of current coding agents. When models attempt features that would take a human more than four hours, their success rates drop quickly; recent evaluations show that even the latest models demonstrate a 50% success rate on tasks that require two hours of human expertise. Long tasks require more context, so agents lose track of the original goal and generate useless code. To prevent these failures, development teams must treat problem decomposition as a core human skill.

Engineers need to create detailed architectural plans before they allow agents to generate any code. A structured approach ensures that humans and machines understand the exact boundaries of the project. To practice intelligent software engineering, teams break large problems into smaller components that agents can digest easily. This breakdown serves as the foundation for an effective AI implementation roadmap and brings assurance to the development process.
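
As a minimal sketch of this decomposition step, assuming teams record a rough human-effort estimate for each task, a task tree can be walked to collect the leaves an agent may receive. The `Task` class, the `agent_ready_leaves` function, and the two-hour default budget are all hypothetical; the budget simply echoes the two-hour figure above and is not a recommended threshold.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Task:
    """A unit of work with a rough human-effort estimate (hypothetical model)."""
    name: str
    estimated_hours: float
    subtasks: list[Task] = field(default_factory=list)


def agent_ready_leaves(task: Task, max_hours: float = 2.0) -> list[Task]:
    """Return the leaf tasks that are small enough to hand to a coding agent.

    Raises ValueError when a leaf still exceeds max_hours, which signals that
    a human must decompose it further before delegating it.
    """
    if not task.subtasks:
        if task.estimated_hours > max_hours:
            raise ValueError(
                f"{task.name!r} ({task.estimated_hours}h) exceeds the "
                f"{max_hours}h budget; decompose it further"
            )
        return [task]
    leaves: list[Task] = []
    for sub in task.subtasks:
        leaves.extend(agent_ready_leaves(sub, max_hours))
    return leaves


if __name__ == "__main__":
    feature = Task("checkout flow", 12, [
        Task("cart data model", 1.5),
        Task("payment API client", 2),
        Task("order confirmation email", 1),
    ])
    print([t.name for t in agent_ready_leaves(feature)])
    # ['cart data model', 'payment API client', 'order confirmation email']
```

Raising an error instead of silently passing an oversized leaf through keeps the decomposition decision with a human, which is the point of treating it as a human skill.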

Effective problem decomposition requires teams to follow specific planning practices:

- Write upfront specifications for every single feature.
- Define input and output parameters for each module.
- Establish explicit data structures before they write business logic.
- Create thorough acceptance criteria for automated testing.
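
The four practices above can be captured in a specification template that must be complete before any agent work starts. The sketch below is hypothetical: the `FeatureSpec` fields map one-to-one onto the list, and `ready_for_agent` is an illustrative gate, not a standard tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FeatureSpec:
    """Hypothetical upfront specification for a single feature."""
    feature: str
    inputs: dict[str, str] = field(default_factory=dict)          # parameter name -> type
    outputs: dict[str, str] = field(default_factory=dict)         # output field -> type
    data_structures: list[str] = field(default_factory=list)      # schemas fixed before logic
    acceptance_criteria: list[str] = field(default_factory=list)  # testable statements

    def missing_sections(self) -> list[str]:
        """Names of the sections that are still empty."""
        sections = {
            "inputs": self.inputs,
            "outputs": self.outputs,
            "data_structures": self.data_structures,
            "acceptance_criteria": self.acceptance_criteria,
        }
        return [name for name, value in sections.items() if not value]

    def ready_for_agent(self) -> bool:
        """A spec is handed to an agent only when every section is filled in."""
        return not self.missing_sections()


if __name__ == "__main__":
    spec = FeatureSpec(
        "password reset",
        inputs={"email": "str"},
        outputs={"reset_sent": "bool"},
    )
    print(spec.missing_sections())  # ['data_structures', 'acceptance_criteria']
    spec.data_structures.append("ResetToken(user_id: int, expires_at: datetime)")
    spec.acceptance_criteria.append("An expired token is rejected")
    print(spec.ready_for_agent())  # True
```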

These practices keep models from hallucinating variables. They matter even more when several agents work together, because multi-agent coordination depends on the specification and task breakdown as a shared source of truth. Software teams must protect this source of truth from machine-generated errors.

Harness Engineering for Application Development

Organizations must wrap their coding agents in tight infrastructure constraints to maintain code quality. Developers call this practice harness engineering, and it serves as a barrier against machine-generated errors. Engineering teams must configure linting and formatting standards before any agent-generated code runs. These upfront configurations prevent models from committing dead code and hallucinated variables that pollute the repository. Developers bring precision to the entire development lifecycle when they establish these constraints early.
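
Assuming Python as the target language, the harness idea can be sketched as a gate that parses agent-generated code before it runs and rejects it on the two symptoms named above: hallucinated variables and dead code. This is a hypothetical, deliberately coarse checker built on the standard `ast` module; the names `harness_gate`, `undefined_names`, and `unreachable_lines` are illustrative. In practice teams would configure an established linter such as Pyflakes or Ruff, which report undefined names among many other rules.

```python
from __future__ import annotations

import ast
import builtins

# Statements after which the rest of a block can never run.
TERMINATORS = (ast.Return, ast.Raise, ast.Break, ast.Continue)


def undefined_names(tree: ast.AST) -> set[str]:
    """Names that are read but never bound anywhere in the module.

    Deliberately coarse: scoping is ignored, so only names that appear
    nowhere as a binding are reported (a typical hallucinated variable).
    """
    bound = set(dir(builtins))
    loaded: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Load):
                loaded.add(node.id)
            else:  # Store or Del binds the name
                bound.add(node.id)
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            bound.add(node.name)
        elif isinstance(node, ast.arg):
            bound.add(node.arg)
        elif isinstance(node, ast.alias):
            bound.add((node.asname or node.name).split(".")[0])
        elif isinstance(node, ast.ExceptHandler) and node.name:
            bound.add(node.name)
    return loaded - bound


def unreachable_lines(tree: ast.AST) -> list[int]:
    """Line numbers of statements that follow a terminator in the same block."""
    lines: list[int] = []
    for node in ast.walk(tree):
        for attr in ("body", "orelse", "finalbody"):
            block = getattr(node, attr, None)
            if not isinstance(block, list):
                continue  # e.g. Lambda.body is an expression, not a block
            for i, stmt in enumerate(block[:-1]):
                if isinstance(stmt, TERMINATORS):
                    lines.append(block[i + 1].lineno)
                    break
    return lines


def harness_gate(source: str) -> list[str]:
    """Return rejection reasons; an empty list means the code may proceed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg} (line {exc.lineno})"]
    problems = [f"undefined name: {n}" for n in sorted(undefined_names(tree))]
    problems += [f"unreachable code at line {n}" for n in unreachable_lines(tree)]
    return problems


if __name__ == "__main__":
    generated = (
        "def total(items):\n"
        "    return sum(item.price for item in items) * tax_rate\n"
        "    print('done')\n"
    )
    print(harness_gate(generated))
    # ['undefined name: tax_rate', 'unreachable code at line 3']
```

Returning a list of reasons rather than a boolean lets the harness feed the exact rejection back to the agent.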

Proper harness engineering keeps AI in application development a safe and predictable process. Industry predictions indicate that agent reliability requires verification loops rather than prompt engineering alone: a prompt only gives instructions, but a constraint actively stops the model from breaking the system. Teams often apply similar principles when they build automated content workflows that require strict quality gates. When companies force agents to operate within predefined boundaries, they largely eliminate later human cleanup; without these boundaries, engineers can spend more hours fixing broken code than they would have spent writing the software from scratch.

Automated Infrastructure Constraints

Development teams automate their linting and formatting rules to enforce architectural boundaries consistently. These automated boundaries push agents to produce compliant code from the start, whereas relying on manual reviews to catch formatting errors wastes engineering hours. Strong rules reject non-compliant code immediately, and feeding each rejection back to the agent steers it toward project standards. Agents sometimes generate code that looks correct but fails under edge cases. For instance, mutation testing, which deliberately introduces small changes into the code and checks whether the tests notice, reveals that AI-generated code often has brittle test coverage. Safe software development requires automated constraints that catch these flaws before they reach the main branch. These checks protect codebases from hidden vulnerabilities, and they become even more critical during complex workflows.
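
To make the mutation-testing point concrete, here is a minimal sketch, assuming Python and a test written as a function that raises `AssertionError` on failure. It swaps one comparison or arithmetic operator at a time and counts how many mutants the test "kills". The operator table and the names `mutants` and `mutation_score` are hypothetical; dedicated tools such as mutmut or PIT generate far richer mutations.

```python
from __future__ import annotations

import ast
import copy
from collections.abc import Callable, Iterator

# Operator swaps used to create mutants: a small, illustrative subset.
SWAPS = {
    ast.Lt: ast.LtE, ast.LtE: ast.Lt,
    ast.Gt: ast.GtE, ast.GtE: ast.Gt,
    ast.Add: ast.Sub, ast.Sub: ast.Add,
}


def _sites(tree: ast.AST) -> list[ast.AST]:
    """Nodes whose operator we know how to swap, in ast.walk order."""
    sites: list[ast.AST] = []
    for node in ast.walk(tree):
        if isinstance(node, ast.BinOp) and type(node.op) in SWAPS:
            sites.append(node)
        elif isinstance(node, ast.Compare) and type(node.ops[0]) in SWAPS:
            sites.append(node)
    return sites


def mutants(source: str) -> Iterator[str]:
    """Yield one mutated copy of `source` per swappable operator."""
    tree = ast.parse(source)
    for index in range(len(_sites(tree))):
        clone = copy.deepcopy(tree)   # mutate a copy, never the original tree
        node = _sites(clone)[index]   # same walk order, so same position
        if isinstance(node, ast.BinOp):
            node.op = SWAPS[type(node.op)]()
        else:
            node.ops[0] = SWAPS[type(node.ops[0])]()
        yield ast.unparse(clone)      # requires Python 3.9+


def mutation_score(source: str, test: Callable[[dict], None]) -> tuple[int, int]:
    """Return (killed, total). A mutant is killed when `test` raises AssertionError.

    Assumes the unmutated source passes `test`. exec() runs arbitrary code,
    so only use this on code you would already run in a sandbox.
    """
    killed = total = 0
    for mutated in mutants(source):
        total += 1
        namespace: dict = {}
        exec(mutated, namespace)
        try:
            test(namespace)
        except AssertionError:
            killed += 1
    return killed, total


if __name__ == "__main__":
    SOURCE = "def is_adult(age):\n    return age >= 18\n"

    def weak_test(ns: dict) -> None:
        assert ns["is_adult"](30)
        assert not ns["is_adult"](5)

    def boundary_test(ns: dict) -> None:
        weak_test(ns)
        assert ns["is_adult"](18)  # the edge case the weak test never checks

    print(mutation_score(SOURCE, weak_test))      # (0, 1): the ">" mutant survives
    print(mutation_score(SOURCE, boundary_test))  # (1, 1)
```

The weak test passes on both the original and the mutant, which is exactly the "looks correct but fails under edge cases" gap: line coverage is complete, yet the boundary is never checked.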

Continuous Verification Loops

Multi-step development processes demand constant verification at every stage of execution. Without human checkpoints, a small error in the first step can cascade and ruin an entire long task. Current data indicates that agents succeed in only 15–20% of compound multi-step workflows when they operate without supervision. A single hallucinated variable can propagate across thousands of lines of code and destroy system stability. Engineering teams integrate continuous verification loops into their development strategy to avoid this outcome. These loops let teams course-correct the model before the damage becomes irreversible, and human oversight at each step keeps the agent aligned with the original specification. However, this constant oversight creates new challenges for human developers.
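
A rough intuition for the compounding effect: if each step of a chain independently succeeded 90% of the time (a hypothetical rate, not one taken from the data above), an unverified 16-step chain would succeed only 0.9^16 ≈ 18.5% of the time. The sketch below shows one way a checkpointed loop might be structured, assuming each step is a callable and `verify` is whatever check a team trusts (tests, a linter, or a human sign-off). `StepResult`, `run_with_checkpoints`, and the two-attempt default are hypothetical.

```python
from __future__ import annotations

from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    attempts: int
    passed: bool


def run_with_checkpoints(
    steps: list[tuple[str, Callable[[], object]]],
    verify: Callable[[str, object], bool],
    max_attempts: int = 2,
) -> list[StepResult]:
    """Run agent steps in order and verify each output before moving on.

    A step that still fails after max_attempts halts the run, so a bad early
    result never becomes the input to later steps.
    """
    results: list[StepResult] = []
    for name, step in steps:
        for attempt in range(1, max_attempts + 1):
            output = step()
            if verify(name, output):
                results.append(StepResult(name, attempt, passed=True))
                break
        else:
            # No attempt passed: record the failure and escalate to a human.
            results.append(StepResult(name, max_attempts, passed=False))
            break
    return results


if __name__ == "__main__":
    drafts = iter(["schema draft (missing id)", "schema draft"])
    steps = [
        ("define schema", lambda: next(drafts)),  # fails once, then passes
        ("write handler", lambda: "handler code"),
    ]
    for result in run_with_checkpoints(steps, lambda name, out: "missing" not in str(out)):
        print(result)
```

Halting on the first unrecoverable step trades throughput for containment: later steps never build on an output that failed verification.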

Cognitive Debt Management

Human developers pay a mental toll when they review thousands of lines of machine-generated code. This constant review creates cognitive debt, which erodes a team's understanding of its own architecture. Industry experts warn that rapid code generation accumulates this debt and leaves developers understanding less of their systems, and engineers cannot troubleshoot critical bugs in production if they cannot comprehend the underlying logic. To manage this burden, developers review code in small chunks rather than in monolithic pull requests. They also document architectural decisions meticulously to preserve system knowledge, which helps new team members learn the system without relying exclusively on machines. Teams further support these engineers by deploying additional models to audit the generated code.
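
One way to enforce small review chunks is to split a large change set into batches under a line budget. This is a minimal sketch, assuming the change set is available as (file path, changed line count) pairs; the `review_batches` function and the 400-line default are hypothetical choices, not an established standard.

```python
from __future__ import annotations


def review_batches(changes: list[tuple[str, int]], max_lines: int = 400) -> list[list[str]]:
    """Group changed files into review batches of at most max_lines changed lines.

    Files keep their original order. A single file larger than the budget
    gets a batch of its own instead of being merged with others.
    """
    batches: list[list[str]] = []
    current: list[str] = []
    current_lines = 0
    for path, lines in changes:
        if current and current_lines + lines > max_lines:
            batches.append(current)  # close the batch before it overflows
            current, current_lines = [], 0
        current.append(path)
        current_lines += lines
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    changes = [("models.py", 120), ("api.py", 250), ("tests.py", 180), ("migrations.py", 600)]
    print(review_batches(changes))
    # [['models.py', 'api.py'], ['tests.py'], ['migrations.py']]
```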

Multi-Model Review Strategy

Development teams implement a multi-model review strategy to audit generated architecture, using different machine learning models to review the code that primary coding agents produce. A single model often misses its own logical flaws, but a secondary model acts as an independent auditor that catches these mistakes. This layered approach creates an early defense against errors before a human reviewer even sees the pull request. Teams that already use AI tools in their design and prototyping phase typically adapt to this review model faster. Secondary agents routinely catch hardcoded variables and architectural deviations that slip past standard linting rules.

Engineering teams need this extra verification layer because machine-generated code introduces significant risks: recent data indicates that AI-generated pull requests contain 2.74 times as many security vulnerabilities as human-written code. Engineering leaders mitigate this risk by pitting models against each other. The primary model writes the code, and the secondary model actively searches for weaknesses, much as marketing teams verify search optimization results with several independent analytical tools. This cross-validation technique gives teams a more secure and dependable pipeline for AI in application development, and it raises the standard of intelligent software engineering without adding to the workload of human reviewers. However, these automated methods still require a final human evaluation.
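
The writer/auditor loop can be sketched independently of any particular model vendor. In this minimal sketch, assuming each model is wrapped as a plain function, the primary model's output goes to a secondary reviewer, and blocking findings are fed back for one more attempt before the code reaches a human. `Finding`, `ReviewOutcome`, `cross_validate`, the severity labels, and the stub models are all hypothetical; no real LLM API is called.

```python
from __future__ import annotations

from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class Finding:
    severity: str  # "low", "medium" or "high" (hypothetical scale)
    message: str


@dataclass
class ReviewOutcome:
    code: str
    findings: list[Finding]
    rounds: int
    ready_for_human: bool


# Hypothetical model interfaces: any client that maps prompt -> code, or
# (spec, code) -> findings, could sit behind these.
Generator = Callable[[str], str]
Reviewer = Callable[[str, str], list[Finding]]


def cross_validate(
    spec: str,
    generate: Generator,
    review: Reviewer,
    max_rounds: int = 2,
    blocking_severities: tuple[str, ...] = ("high",),
) -> ReviewOutcome:
    """Primary model writes, secondary model audits, blocking findings trigger a revision."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    prompt = spec
    for round_no in range(1, max_rounds + 1):
        code = generate(prompt)
        findings = review(spec, code)
        blocking = [f for f in findings if f.severity in blocking_severities]
        if not blocking:
            return ReviewOutcome(code, findings, round_no, ready_for_human=True)
        # Feed the secondary model's objections back to the primary model.
        prompt = spec + "\n\nFix these review findings:\n" + "\n".join(
            f"- {f.message}" for f in blocking
        )
    return ReviewOutcome(code, findings, max_rounds, ready_for_human=False)


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        # Stub: hardcodes a credential until the reviewer objects.
        if "Fix these review findings" in prompt:
            return "API_KEY = os.environ['BILLING_API_KEY']"
        return "API_KEY = 'sk-test-123'"

    def secondary(spec: str, code: str) -> list[Finding]:
        # Stub: flags a string literal assigned directly to API_KEY.
        if "API_KEY = '" in code:
            return [Finding("high", "hardcoded credential in API_KEY")]
        return []

    outcome = cross_validate("Call the billing API", primary, secondary)
    print(outcome.rounds, outcome.ready_for_human)  # 2 True
```

Even when `ready_for_human` is true, the outcome is a candidate for human review, not an approval; non-blocking findings travel with it.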

QA and the Human Element

Automated reviews and secondary models do not replace manual testing. Coding agents handle boilerplate tasks easily, but they struggle with the nuances of human interaction and deep security logic. Human reviewers must validate user experience (UX) and user interface (UI) elements because machines cannot evaluate how an application feels to a customer. Software teams also require human oversight to validate the security protocols that protect sensitive data. Just as communication teams manually review digital materials for brand alignment, engineering teams manually review application logic for business alignment.

Senior engineers must lead these validation efforts to ensure product safety. Junior developers often lack the architectural context needed to spot subtle flaws in machine-generated systems, while senior engineers have it. Industry reports show that senior engineers dominate AI tool usage because they possess the expertise to validate production code effectively. These experts bring confidence to the development lifecycle when they inspect the final output: they connect the generated components and verify that the application meets the original specifications. They hold final authority over what code reaches production, and this strict oversight forces companies to change how their engineering departments operate.

Engineering Team Reorganization

Successful technology integration fundamentally shifts how engineering teams operate. Developers transition from traditional code-writers into system orchestrators who manage machine output, and they need a reliable AI implementation roadmap that updates traditional agile ceremonies and sprint planning. Teams no longer estimate tasks by typing speed; instead, they base their estimates on the complexity of problem decomposition and specification writing.

Engineering leaders capture value only when they embrace this organizational redesign. Research indicates that organizations that treat AI integration as a structural redesign capture three times as much value as companies that simply purchase tools. Leaders build resilient teams when they change their specific development processes.

Engineering teams implement several sequential changes to restructure their operations for AI in application development:

- Developers redefine their responsibilities to prioritize system architecture over syntax memorization.
- Scrum masters adjust sprint planning metrics to account for the time that the team needs to draft upfront specifications.
- Reviewers update code review criteria to evaluate how well the generated output matches the initial architectural constraints.
- Engineers establish continuous verification loops to monitor and correct the machine output throughout the sprint.

These operational shifts drive measurable productivity gains across the organization. They turn the development lifecycle into an orchestrated process where humans design the boundaries and machines execute the logic safely, and that process helps teams maintain their engineering rigor.

Conclusion

In summary, AI does not remove the need for engineering rigor; it makes upfront planning and architectural design more vital than ever. The integration of AI in application development doesn't replace human developers, but it forces them to shift their focus toward problem decomposition and strict system verification. If engineering teams implement these new processes on smaller components first, they can safely roll out augmented workflows across entire enterprise applications. Companies that build proper code generation processes and testing environments secure high returns on their technological investments. Drafting specification templates today establishes the boundaries that guide coding agents tomorrow.