Introduction

Software teams often treat usability as a future luxury when they build an initial product version under severe budget and technical constraints. Development squads rush to write code, and they postpone design decisions until they secure the next funding round. However, this approach creates flaws in the product architecture. Industry data indicates that 90% of startups fail, and many trace their collapse back to the initial MVP phase. When teams build products without clearly understanding user needs, they launch confusing interfaces that drive away early adopters.

Integrating user experience design principles from day one is a risk-reduction strategy. It ensures that early adopters can actually test the product's value. Foundational design is a necessary step in validating market demand, not an aesthetic choice. When the software behaves predictably, users focus on the solution instead of struggling with navigation. Teams set initial usability standards early because structural design fixes drain capital after release. A logical interface also ensures that the feedback loop provides useful information about the business hypothesis.

Step 1: Define Core Hypothesis to Prevent Scope Creep

Development teams often struggle to define a single problem statement when they plan new software to test a business hypothesis. Defining a single hypothesis ensures the product addresses actual market needs from the first release. Industry data shows that two-thirds of product-market fit failures occur because the company never finds a market for the product. Teams fail when they build complex applications based on assumptions rather than tested hypotheses. Validating a software idea before building the initial product reduces the risk of creating features nobody wants. A clear problem statement provides a solid foundation for development and prevents costly scope creep.

Development squads stay aligned on the primary goal when they focus on one core assumption, and project timelines remain realistic under strict constraints. Teams apply UX heuristics and user-centered design to understand the specific problem the software solves for the target demographic. For example, if developers build a new content creation system, they must test whether creators actually need faster drafting tools before adding unnecessary formatting features. Every additional feature distracts from the main business question.

Integrating foundational user experience design principles early clarifies this validation process. If the core hypothesis proves incorrect, the company saves capital. The team can pivot the strategy without losing months of expensive engineering effort. Clear problem definitions keep the budget focused on validation rather than expansion. A tight focus eliminates the guesswork that usually inflates initial budgets. Teams that test a single hypothesis collect accurate behavioral data from their target audience. This focused approach removes confusion and sets clear expectations for the next development phase.

Step 2: Strip Away Secondary Features from User Flow

Next, teams map out the critical user flow to identify the exact steps required to deliver value. Once they have outlined this path, they must strip away secondary functions. Industry research shows that building too many features remains the biggest mistake, because creators add them out of anxiety rather than validation. Every extra button or menu imposes unnecessary cognitive load on early adopters. A poor interface creates confusing onboarding, broken navigation, and screens that require lengthy explanation to understand.

Early adopters evaluate new software based on its ability to solve a definite problem. If users cannot navigate the interface, they abandon the application before experiencing the solution. Extra features dilute the value proposition and hide the primary function from the target audience. A stripped-down interface provides concrete feedback on the core business concept. When users interact with a single path, their behavior reveals whether the core idea holds actual market value. Teams do not have to wonder which feature caused a user to leave the platform.

Development teams must focus strictly on the primary task. For example, an initial brand-building framework application only needs to collect user inputs and generate a strategy document. Adding social sharing capabilities or community forums at this stage creates harmful distractions. A minimal flow keeps development costs low and speeds up the release cycle. Users provide clearer feedback when they follow a simple and linear path. This simplicity allows engineering teams to identify friction points and improve the software without wasting budget on unnecessary additions.

Step 3: Prioritize System Performance and Response Times

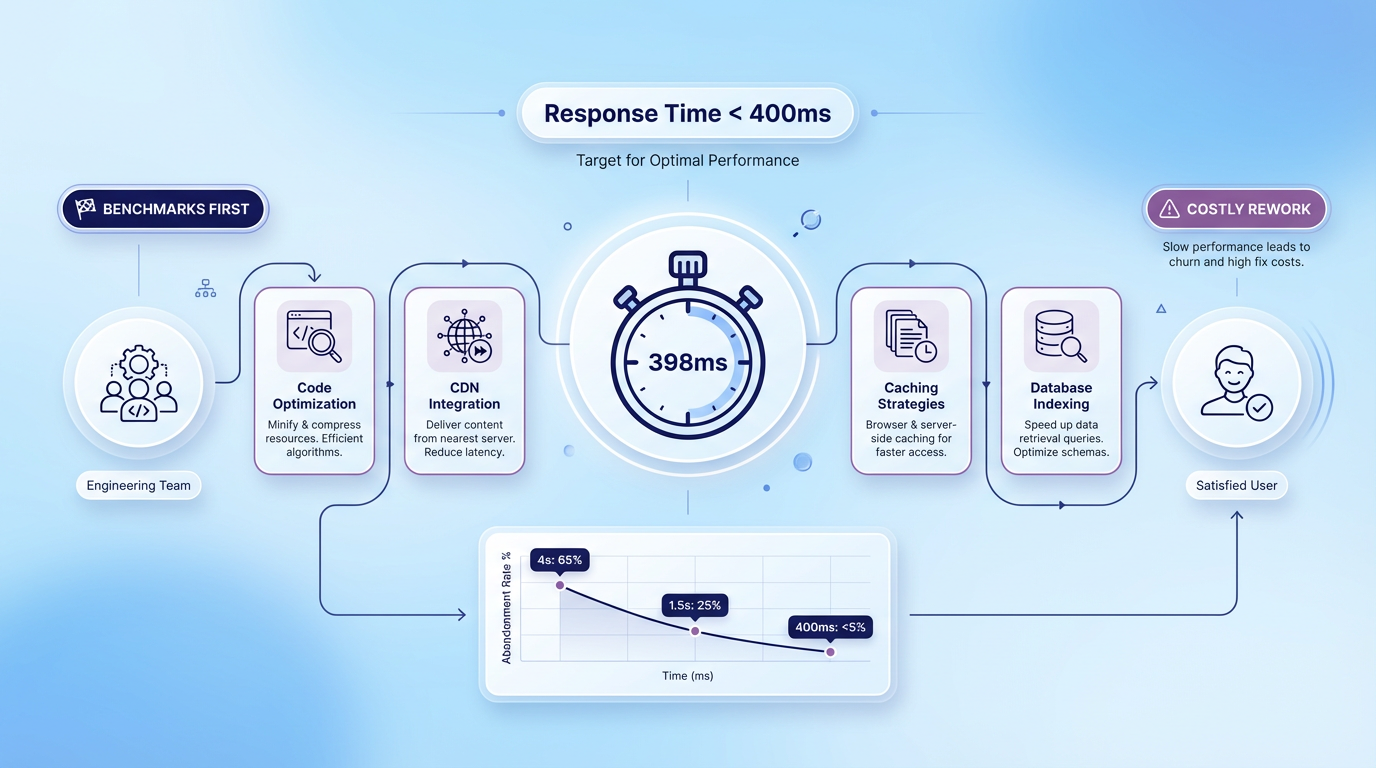

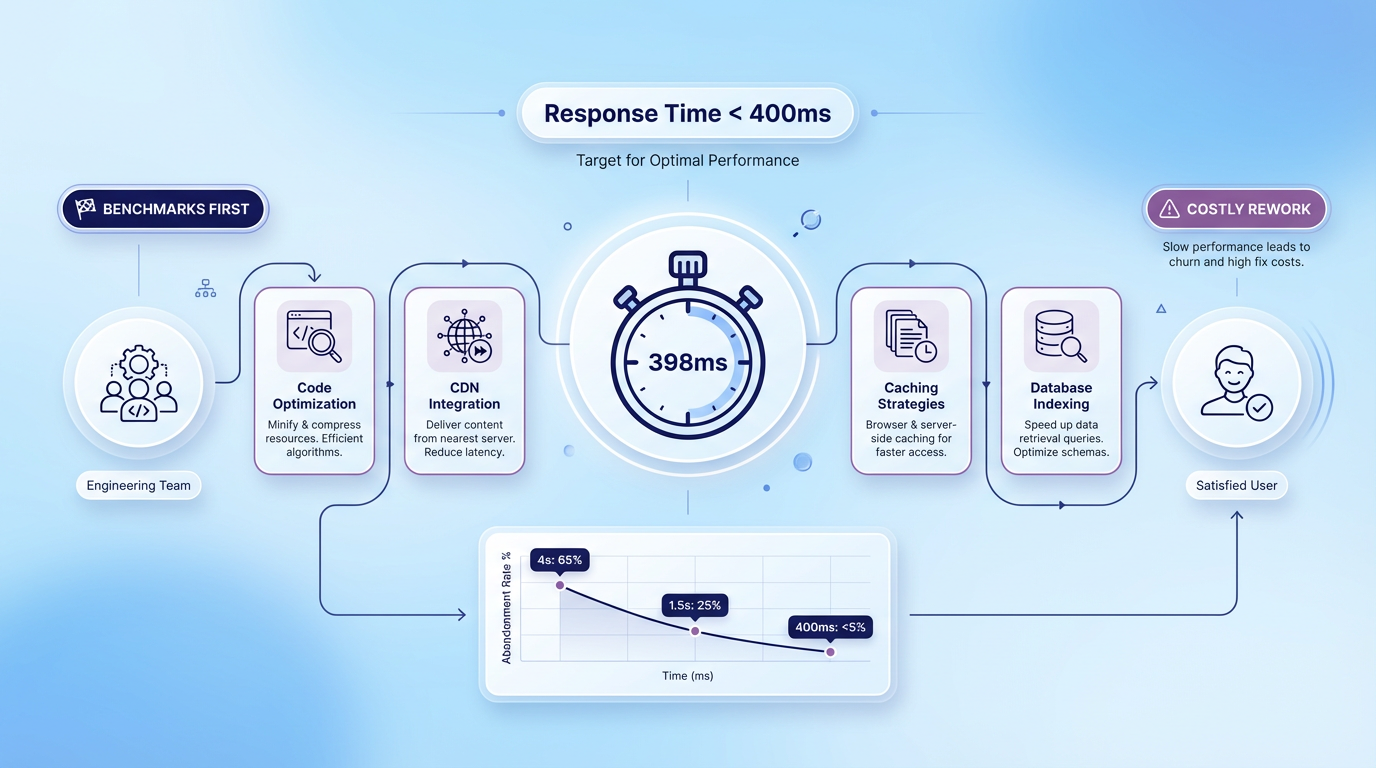

Speed dictates how people perceive the quality of a new application, so engineering teams should treat system performance as a usability concern. Engineers must prioritize response times and system feedback based on the Doherty Threshold. This principle states that productivity soars when a computer and its users interact at a pace (under 400 milliseconds) that ensures neither has to wait on the other. Slow load times break human concentration and cause high abandonment rates.

Recent studies indicate that 40% of people abandon a website if a page takes longer than three seconds to load. Furthermore, 90% of users have stopped using an application due to poor performance. Teams should establish measurable speed benchmarks before they write the first line of code. Performance is a core usability factor that becomes expensive to retrofit later in the development cycle. Fixing architectural delays after launch requires rewriting foundational code, and this structural rework drains budgets that teams should spend on marketing and growth.
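A measurable speed benchmark can be as simple as a percentile check against a fixed budget. The sketch below is a minimal illustration, assuming latency samples are already collected in milliseconds; the names `INTERACTION_BUDGET_MS`, `PAGE_LOAD_BUDGET_MS`, `percentile`, and `check_budget` are hypothetical, and the choice of the 95th percentile is an assumption rather than something the figures above prescribe.

```python
import math

# Assumed budgets in milliseconds. The 400 ms value mirrors the Doherty
# Threshold and the 3000 ms value mirrors the three-second abandonment point.
INTERACTION_BUDGET_MS = 400
PAGE_LOAD_BUDGET_MS = 3000


def percentile(samples, pct):
    """Return the nearest-rank percentile (pct in 1-100) of the samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Nearest-rank method: smallest value with at least pct% of samples at or below it.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def check_budget(samples_ms, budget_ms, pct=95):
    """Report whether the chosen percentile of the samples stays within the budget."""
    observed = percentile(samples_ms, pct)
    return {
        "percentile": pct,
        "observed_ms": observed,
        "budget_ms": budget_ms,
        "within_budget": observed <= budget_ms,
    }


if __name__ == "__main__":
    # Illustrative samples only, not real measurements.
    interaction_samples = [120, 180, 210, 250, 300, 320, 350, 380, 390, 900]
    print(check_budget(interaction_samples, INTERACTION_BUDGET_MS))
```

Checking a high percentile rather than the average keeps a single slow outlier from hiding behind many fast responses, which is why the illustrative samples above fail the budget even though most of them are under 400 ms.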

Integrating user experience design principles ensures that the software responds quickly to inputs. A fast application functions as the cornerstone of a complete digital strategy. User-centered design respects the user's time and attention. Engineering squads can implement specific tactics to maintain precise performance standards:

- Optimize database queries to reduce data retrieval delays.
- Compress media files to accelerate page rendering.
- Implement loading animations to provide visual feedback during background processes.
- Minimize third-party scripts that block the main execution thread.

Companies retain more early adopters when they deliver a responsive product. Smooth interactions keep users focused on evaluating the actual business solution instead of waiting for pages to load.

Step 4: Validate Prototype Interactions

Before they write any code, teams can test the proposed solution with AI-assisted wireframes and low-code prototype tools. A Minimum Viable Product (MVP) functions primarily as a prototype rather than a finished product, and a cross-functional discovery team needs a design lead to create it successfully. Teams that understand this distinction can feel confident about time spent on low-fidelity wireframes. These early mockups allow software creators to validate screen interactions before they commit to expensive custom engineering. If development squads jump straight into code, they often build the wrong features and exhaust their budgets prematurely.

Prototype software allows creators to connect screens and simulate the user journey with simple clicks. Test participants navigate these simulated environments, and their actions reveal whether the proposed layout makes sense. Product teams watch how early adopters interact with these digital models and gather immediate feedback about confusing elements. This early test phase saves capital because designers can move a button in a design file in seconds, while engineers may need days to rewrite backend logic. Consequently, teams can discard failed concepts cheaply and iterate on successful ideas without wasting engineering resources.
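One way to reason about a click-through prototype is to treat it as a graph of screens and links. The sketch below is an illustration only: the screen names in `PROTOTYPE` and the helper `clicks_to_reach` are hypothetical, and it assumes the prototype's links have been written out by hand as a dictionary. It uses a breadth-first search to report the fewest clicks needed to get from a starting screen to the screen that delivers the core value.

```python
from collections import deque

# Hypothetical prototype: each screen maps to the screens its links lead to.
PROTOTYPE = {
    "landing": ["signup", "pricing"],
    "pricing": ["signup"],
    "signup": ["editor"],
    "editor": ["export"],
    "export": [],
}


def clicks_to_reach(screens, start, goal):
    """Return the fewest clicks from start to goal, or None if goal is unreachable."""
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        screen, clicks = queue.popleft()
        if screen == goal:
            return clicks
        for nxt in screens.get(screen, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, clicks + 1))
    return None


if __name__ == "__main__":
    print(clicks_to_reach(PROTOTYPE, "landing", "export"))
```

A result of `None` would flag a dead end in the mockup, and a high click count would suggest the primary task is buried, both of which are cheap to correct at the wireframe stage.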

Establish Visual Hierarchy With UX Heuristics

During the wireframe stage, designers apply established heuristics to organize information logically across every screen. Clear visual hierarchy guides users naturally through the interface from their first click to the final interaction. Designers use sizing, contrast, and spacing to highlight the most important actions that test participants need to take. When a page looks structured and predictable, people understand immediately how to achieve their goals. If the interface lacks clear focal points, early adopters click randomly and abandon the task out of frustration. Designers ensure that primary buttons stand out from secondary links. They group related elements together so the layout matches human cognitive patterns. This logical arrangement reduces the mental effort users need to navigate the application. A well-organized screen keeps users focused on the software's core value instead of forcing them to decipher confusing menus.

Test Interactions Before Engineering Costs

Prototype tests with target audiences keep users focused on the software's core value, catch interaction flaws early, and protect the development budget. Product teams make a calculated decision when they apply heuristic rules to evaluate mockups before engineers write code. Industry analysts estimate that it costs $1 to fix a software defect during the design phase, while it costs more than $100 to address the same issue after customer release. This financial reality makes early prototype tests mandatory for companies that operate under tight constraints. If teams discover a fundamental navigation error during a user interview, they simply update the wireframe. When engineers discover that same error after deployment, they must rewrite code, update databases, and run extensive quality assurance tests. Early error detection during the design phase preserves capital for marketing and future feature expansion. Teams avoid preventable structural mistakes and save their limited funds.
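The cost ratio above lends itself to a quick back-of-the-envelope calculation. The sketch below is a rough illustration, not a costing model: `DESIGN_FIX_COST`, `RELEASE_FIX_COST`, and `rework_cost` are hypothetical names, the per-defect figures are taken directly from the ratio cited above, and the post-release figure is treated as a lower bound because the estimate says "more than $100".

```python
# Per-defect fix costs in dollars, taken from the ratio cited in the text.
DESIGN_FIX_COST = 1
RELEASE_FIX_COST = 100  # lower bound: the cited estimate is "more than $100"


def rework_cost(defects_total, caught_in_design):
    """Estimate total fix cost when some defects are caught in design and the rest after release."""
    if not 0 <= caught_in_design <= defects_total:
        raise ValueError("caught_in_design must be between 0 and defects_total")
    caught_after_release = defects_total - caught_in_design
    return (caught_in_design * DESIGN_FIX_COST
            + caught_after_release * RELEASE_FIX_COST)


if __name__ == "__main__":
    # Illustrative scenario: 20 defects, with and without prototype testing.
    print(rework_cost(20, 0))   # nothing caught during design
    print(rework_cost(20, 15))  # 15 of 20 caught during design
```

Under these assumed figures, catching 15 of 20 defects at the prototype stage lowers the estimated fix cost from at least $2,000 to at least $515.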

Refine Prototype Through Early Validation

Designers save limited funds when they continuously refine the digital prototype and build a stronger foundation for the final product. Product teams use the feedback from initial test sessions to adjust layouts, simplify workflows, and remove confusing terminology. A verified prototype gives engineers a clear blueprint that eliminates guesswork during the code phase. Early validation confirms that the engineering team builds what the market demands rather than what the creators assume people want. When the company implements user-centered design from the beginning, it avoids the trap of isolated software development. Developers understand how the application should behave because they can click through the final prototype themselves. This clarity speeds up the development process and reduces internal debates about specific feature functions.

Step 5: Build Core Architecture With Outcome-Based Teams

After they finalize the prototype, companies often partner with an outcome-based software team to build the core architecture. This strategy helps ensure that technical constraints do not compromise foundational design values while management maintains strict budget control. An experienced external team provides steady progress because it focuses on specific business results rather than hourly tasks. These specialized developers prioritize user experience design principles and write clean code that supports smooth interactions and fast load times.

Businesses that choose nearshore development benefit from same-day answers and faster defect resolution across similar time zones. This geographical alignment allows internal teams to maintain secure communication channels and monitor the development process daily. Financial data shows that nearshore software development rates average 46% lower than onshore equivalents. This cost reduction allows organizations to fund better test protocols and marketing campaigns. The external partner can integrate essential backend services, such as integrated data platforms, while respecting the initial funding limits. If a technical challenge arises during database construction, the outcome-based team is expected to resolve it without requesting additional budget. This partnership model allows the internal staff to focus on customer acquisition while the external engineers translate the validated prototype into a functional software product.

Step 6: Measure Behavior Against User Experience Design Principles

Internal teams start a continuous feedback loop when they launch the functional software product to a closed group of early adopters. As technology experts often note, real insights only emerge once the product is in front of users. Development leads measure user behavior to validate the application against established benchmarks. When teams apply proven UX heuristics, they identify exactly where customers struggle with the interface. By observing these first interactions, companies gather reliable data about how people naturally navigate the system. The team evaluates the product against specific criteria so the software meets baseline usability standards. Project leads usually follow a strict measurement protocol:

- Track session lengths to determine if users complete their primary tasks efficiently.
- Monitor drop-off rates on critical screens to identify hidden navigation friction.
- Record support requests to highlight unclear terms or absent instructions.
- Compare actual click paths against the intended user flows from the prototype phase.

Teams that practice user-centered design analyze this quantitative data to plan their next development cycle. They measure the software's performance against standard interface rules and prioritize the most critical bugs. This objective analysis prevents emotional decisions about future feature development. The feedback loop helps ensure that every subsequent update directly improves the customer journey.
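Two of the measurements in this protocol, drop-off rates and click-path comparison, can be sketched in a few lines. The example below is a minimal illustration that assumes each session has already been reduced to an ordered list of screen names; `INTENDED_FLOW`, `drop_off_rates`, and `follows_intended_flow` are hypothetical names, and real analytics tools would supply the session data in their own formats.

```python
from collections import Counter

# Hypothetical intended flow carried over from the prototype phase.
INTENDED_FLOW = ["landing", "signup", "editor", "export"]


def drop_off_rates(sessions, flow):
    """Return, per flow step, the share of sessions that reached it but not the next step.

    A session counts as reaching a step when it visited that step and every
    earlier step in the flow (visit order is not checked here).
    """
    reached = Counter()
    for path in sessions:
        visited = set(path)
        for step in flow:
            if step not in visited:
                break
            reached[step] += 1
    rates = {}
    for current, nxt in zip(flow, flow[1:]):
        if reached[current] == 0:
            rates[current] = None  # no session reached this step
        else:
            rates[current] = 1 - reached[nxt] / reached[current]
    return rates


def follows_intended_flow(path, flow):
    """Return True when the flow's screens appear in order within the path (detours allowed)."""
    remaining = iter(path)
    # Each membership test consumes the iterator up to the match, enforcing order.
    return all(step in remaining for step in flow)


if __name__ == "__main__":
    # Illustrative sessions only, not real usage data.
    sessions = [
        ["landing", "signup", "editor", "export"],
        ["landing", "signup", "editor"],
        ["landing", "pricing", "signup"],
        ["landing"],
    ]
    print(drop_off_rates(sessions, INTENDED_FLOW))
    print([follows_intended_flow(s, INTENDED_FLOW) for s in sessions])
```

The step with the highest drop-off rate is a reasonable first candidate for a usability review, and sessions that do not follow the intended flow show where real navigation diverges from the prototype.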

Conclusion

In summary, software teams need a sequential release process to improve the customer journey, build a usable initial product, and protect the development budget. Teams validate their assumptions effectively when they incorporate user experience design principles from the beginning. They use early product releases as experimental tools to gather behavioral data, and they rely on foundational design so that user feedback reflects market value rather than confusing navigation. By prioritizing system performance and visual hierarchy, they avoid expensive redesigns and improve their chances of long-term survival. Applying these steps establishes the digital trust that helps capture early adopters and grow operations. Development teams can begin by reviewing their current design workflows and applying these principles to their next project.